Proxmox ZFS vs. LVM vs. Ceph: Storage Decision

| Categories: | credativ® Inside Proxmox |
|---|---|
| Tags: | Ceph LVM proxmox Proxmox VE ZFS |

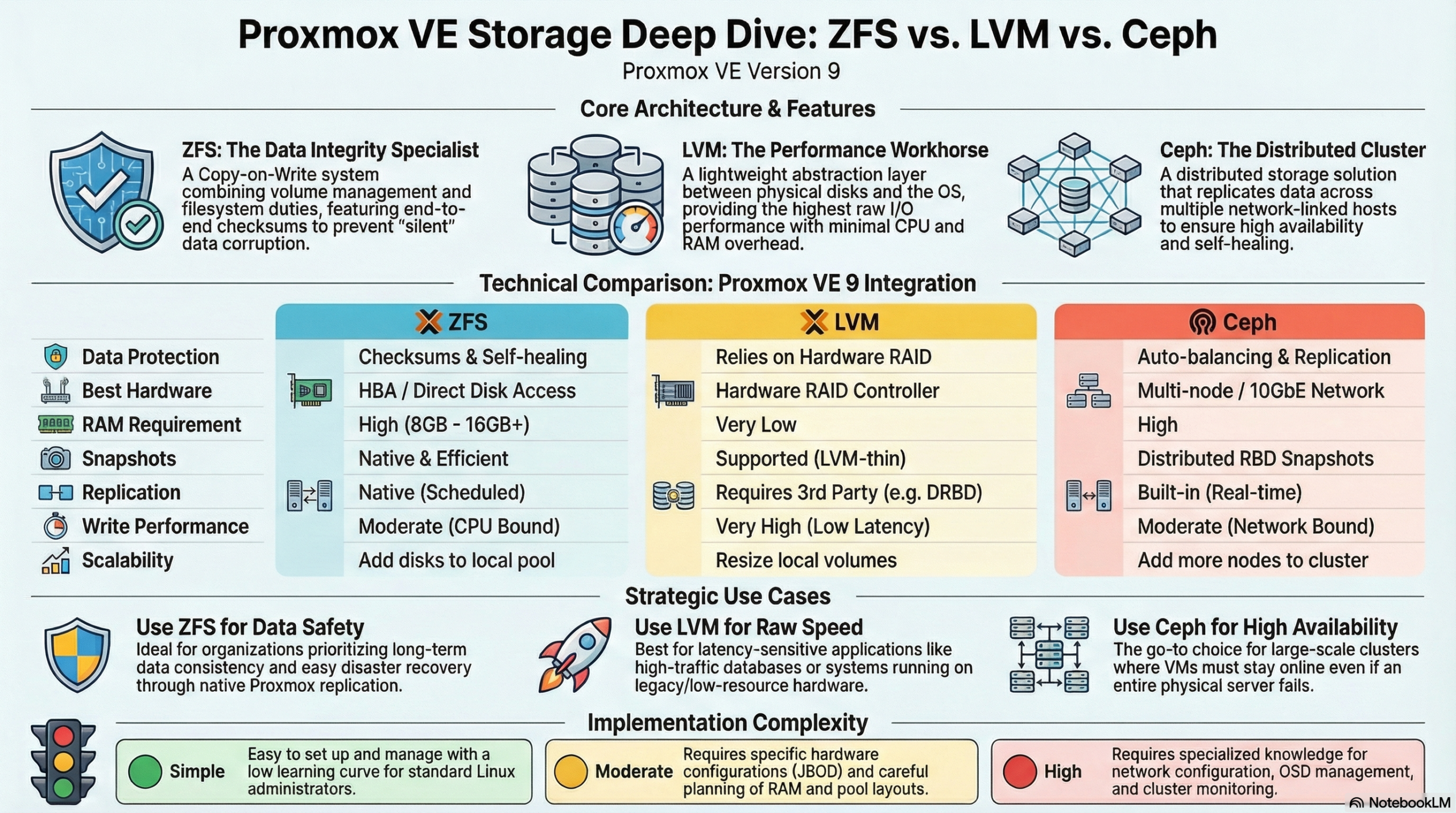

The choice between ZFS, LVM, and Ceph in Proxmox depends on your specific requirements. ZFS offers integrated data redundancy and snapshots for local systems, LVM enables flexible volume management with high performance, while Ceph provides distributed storage solutions for cluster environments. Each technology has different strengths in terms of performance, scalability, and maintenance effort.

What is the difference between ZFS, LVM, and Ceph in Proxmox?

ZFS is a copy-on-write file system with integrated volume management and data redundancy. It combines a file system and volume manager into one solution and offers features such as snapshots, compression, and automatic error correction. ZFS is particularly suitable for local storage scenarios with high data integrity requirements.

LVM (Logical Volume Manager) works as an abstraction layer between physical disks and the file system. It enables flexible partitioning and dynamic volume resizing at runtime. LVM offers high performance and easy management, but requires additional redundancy mechanisms such as software RAID.

Ceph represents a fully distributed storage architecture that replicates data across multiple nodes. It provides object, block, and file storage in a single system and scales horizontally. Ceph is suitable for large cluster environments with high availability requirements.

Which storage solution offers the best performance for different workloads?

LVM with ext4 or XFS typically delivers the highest performance for I/O-intensive applications such as databases. Its low overhead makes it the first choice for latency-critical workloads. ZFS follows with good performance alongside its data integrity features, while Ceph exhibits higher latency because every write has to cross the network.

For database workloads, LVM with fast SSDs and direct access is recommended. The minimal abstraction layer reduces latency and maximizes IOPS. ZFS can offer competitive performance here through ARC cache and L2ARC acceleration, especially for read-heavy workloads.

File services benefit from ZFS features such as deduplication and compression, which save storage space.

Ceph is suitable for distributed file services with high availability requirements, even if performance is limited by network communication. Because every host accesses the same storage, virtual machines can be moved from host to host with virtually no delay, whether by a tool like ProxLB or in the event of a failover.

Virtual machines run well on all three systems. LVM offers the best raw performance, ZFS enables efficient VM snapshots, and Ceph enables live migration between hosts without a separate shared storage system, because Ceph itself acts as the shared storage.

| Feature | LVM | ZFS | Ceph |
|---|---|---|---|
| Architecture Type | Local (Block Storage) | Local (File System & Volume Manager) | Distributed (Object/Block/File) |
| Performance (Latency) | Excellent (Minimal Overhead) | Good (Scales with RAM/ARC) | Moderate (Network Dependent) |
| Snapshots | Yes (via LVM-thin) | Yes (very efficient) | Yes |
| Data Integrity | Limited (RAID-dependent) | Excellent (Checksumming) | Excellent (Checksumming) |
| Scalability | Limited (Single Node) | Medium (within host) | Very high (Horizontal in cluster) |
| Network Requirements | Standard (1 GbE sufficient) | Standard (1 GbE sufficient) | High (10 to 25 GbE recommended) |
| Main Application Area | Maximum single-node performance | High data security & local speed | Enterprise cluster & high availability |
| Complexity | Simple | Moderate | High |

How do you decide between local and distributed storage architecture?

Local storage solutions such as ZFS and LVM are suitable for single-host environments or when maximum performance is more important than high availability. Distributed systems like Ceph are necessary when data must be available across multiple hosts or when automatic failover mechanisms are required.
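The criteria above can be sketched as a small first-pass decision helper. This is only an illustration of the reasoning in this article, not a sizing tool: the function name `suggest_storage`, its parameters, and the three-host threshold are assumptions chosen for the example.

```python
def suggest_storage(hosts: int, needs_shared_storage: bool,
                    integrity_over_raw_speed: bool) -> str:
    """Return a rough first suggestion: 'Ceph', 'ZFS', or 'LVM'.

    Hypothetical helper; the thresholds mirror the prose, nothing more.
    """
    if hosts < 1:
        raise ValueError("at least one host is required")
    # Distributed storage only pays off when data must be available
    # across hosts and there are enough hosts to form a cluster.
    if needs_shared_storage and hosts >= 3:
        return "Ceph"
    # Too few hosts for Ceph, or no need for shared storage:
    # choose between the two local options.
    if needs_shared_storage or integrity_over_raw_speed:
        return "ZFS"  # checksumming, snapshots, optional periodic replication
    return "LVM"      # minimal overhead on a single node


print(suggest_storage(hosts=1, needs_shared_storage=False,
                      integrity_over_raw_speed=False))
print(suggest_storage(hosts=5, needs_shared_storage=True,
                      integrity_over_raw_speed=True))
```

A real decision would also weigh network hardware, budget, and team expertise, which this sketch deliberately leaves out.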

Infrastructure size plays a decisive role. Individual Proxmox hosts or small setups with two to three servers work well with local storage solutions. From three to four hosts upward, Ceph becomes interesting because it enables true high availability without a single point of failure. Ceph relies on a majority quorum, so an odd number of nodes is strongly recommended. A cluster with an even number of nodes tolerates no more failures than the next smaller odd-sized cluster, and it also requires manual adjustments in Proxmox VE.
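The quorum argument can be made concrete with simple majority arithmetic. The sketch below assumes a plain majority rule (more than half of the voting members must be reachable); the function names are illustrative and not part of any Ceph or Proxmox API.

```python
def quorum_size(voters: int) -> int:
    """Smallest number of voters that forms a strict majority."""
    if voters < 1:
        raise ValueError("need at least one voter")
    return voters // 2 + 1


def tolerated_failures(voters: int) -> int:
    """How many voters may fail while a majority is still reachable."""
    return voters - quorum_size(voters)


# Compare odd and even cluster sizes side by side.
for n in range(3, 7):
    print(f"{n} voters: quorum {quorum_size(n)}, "
          f"tolerates {tolerated_failures(n)} failure(s)")
```

Under this rule, going from three to four voters adds hardware without adding fault tolerance, which is why odd sizes are the usual recommendation.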

Network requirements differ significantly. Local storage systems only need a standard network for management, while Ceph needs dedicated 10GbE connections for good performance. Where performance requirements are higher, 25GbE connections are now preferred for the data load. Ideally, these connections are reserved exclusively for Ceph and are provided in addition to the network used for virtualization. The network infrastructure therefore significantly influences the storage decision.

Maintenance effort and complexity increase with distributed systems. ZFS and LVM are easier to understand and maintain, while Ceph requires specialized knowledge for configuration, monitoring, and troubleshooting.

Proxmox VE with ZFS offers a middle ground between true shared storage and purely local storage: replication with pve-zsync. This keeps hosts in sync automatically. The replication is not synchronous, however, but runs at fixed intervals, such as every 15 minutes, so changes made since the last run can be lost if a host fails. For certain workloads, this is perfectly sufficient.
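What interval-based replication means for potential data loss can be estimated with a short calculation. This is a minimal sketch under a simplifying assumption: each run replicates a snapshot taken at its start, so the target only holds that state once the transfer has finished. The function name and the two-minute transfer time are hypothetical.

```python
from datetime import timedelta


def worst_case_data_loss(interval: timedelta,
                         sync_duration: timedelta) -> timedelta:
    """Upper bound on lost changes if the source host fails.

    Worst case: the host fails just before the next run completes, so the
    newest state on the target is the snapshot from the previous run.
    """
    if interval <= timedelta(0) or sync_duration < timedelta(0):
        raise ValueError("interval must be positive, duration non-negative")
    return interval + sync_duration


loss = worst_case_data_loss(timedelta(minutes=15), timedelta(minutes=2))
print(f"Worst-case data loss window: {loss}")
```

If that window is too large for a workload, a shorter interval or synchronous shared storage such as Ceph is the more suitable choice.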

What are the most important factors in Proxmox storage planning?

Hardware requirements vary greatly between storage technologies. ZFS needs sufficient RAM (a common rule of thumb is 1 GB per TB of storage) so that the integrated ARC cache can reach its full performance. LVM runs on minimal hardware. Ceph requires dedicated network hardware as well as sufficient RAM and multiple hosts. The available hardware therefore often determines which storage options are realistic.
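The RAM rule of thumb for ZFS translates into a quick budget check. The sketch below uses the figure of 1 GB per TB from the text; the function names, the example pool size, and the host overhead value are assumptions for illustration only.

```python
def zfs_arc_estimate_gb(pool_size_tb: float, gb_per_tb: float = 1.0) -> float:
    """RAM to reserve for the ARC, using a simple per-TB rule of thumb."""
    if pool_size_tb < 0 or gb_per_tb < 0:
        raise ValueError("sizes must be non-negative")
    return pool_size_tb * gb_per_tb


def host_ram_budget_gb(pool_size_tb: float, guest_ram_gb: float,
                       host_overhead_gb: float = 4.0) -> float:
    """Total RAM estimate: guests + ARC + an assumed host overhead."""
    return guest_ram_gb + zfs_arc_estimate_gb(pool_size_tb) + host_overhead_gb


# Example: 24 TB pool, 64 GB assigned to virtual machines.
print(f"ARC estimate: {zfs_arc_estimate_gb(24):.0f} GB")
print(f"Host RAM budget: {host_ram_budget_gb(24, 64):.0f} GB")
```

Treat the result as a starting point for planning; actual ARC needs depend on the workload and on features such as deduplication.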

Backup strategies must match the chosen storage solution. ZFS snapshots enable efficient incremental backups, LVM snapshots offer similar functionality, while Ceph backups are implemented via RBD snapshots or external tools. Backup requirements significantly influence the choice of storage.

Scalability planning should take future growth into account. LVM allows for easy volume expansion, ZFS pools can be expanded with additional drives, and Ceph scales by adding new hosts. The planned growth direction influences the optimal storage architecture.

Budget considerations include not only hardware costs but also maintenance effort and the required expertise. Simple LVM setups have low total costs, while Ceph clusters require higher investments in hardware and training.

Bonus: ZFS and Ceph both include integrated checksumming that actively protects against so-called "bit rot" (creeping data corruption). They detect corrupted data automatically and can repair it from redundant copies. LVM without additional layers such as a RAID layer does not offer this.

How credativ® supports Proxmox storage optimization

credativ® offers comprehensive consulting and implementation for optimal Proxmox storage decisions based on your specific requirements. Our open-source experts analyze your workloads, infrastructure, and growth plans to recommend the ideal storage architecture.

Our services include:

- Detailed storage architecture assessment and technology selection

- Professional implementation and configuration of ZFS, LVM, or Ceph

- Performance optimization and monitoring setup for selected storage solutions

- 24/7 support and maintenance for production Proxmox environments

- Training for your IT team on storage management and best practices

With over 25 years of experience in the open-source sector and direct access to our permanent Linux specialists, you receive professional Proxmox support without going through call centers. Contact us for a personalized consultation on your Proxmox storage strategy and benefit from our proven enterprise support.


About the author

Peter Dreuw

Head of Sales & Marketing


Peter Dreuw has worked for credativ GmbH since 2016 and has been a team lead since 2017. Since 2021 he has been part of the management team as VP Services of Instaclustr. With the acquisition by NetApp, his new role became "Senior Manager Open Source Professional Services". As part of the spin-off, he joined the executive management as an authorized signatory (Prokurist). He is responsible for leading sales and marketing. He has been a Linux user from the very beginning and has run Linux systems since kernel 0.97. Despite extensive operational experience, he is a passionate software developer and is also well versed in low-level, hardware-related systems.